Weekly Power Outlet US - 2024 - Week 3

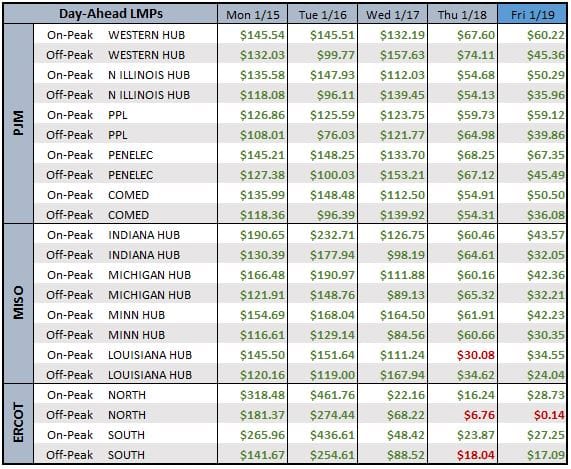

LMPs shot up as winter storms worthy of naming raced across the US and Canada.

Winter Blast, FERC, AI=Load

Energy Market Update - 2024 - Week 3, brought to you by Acumen.


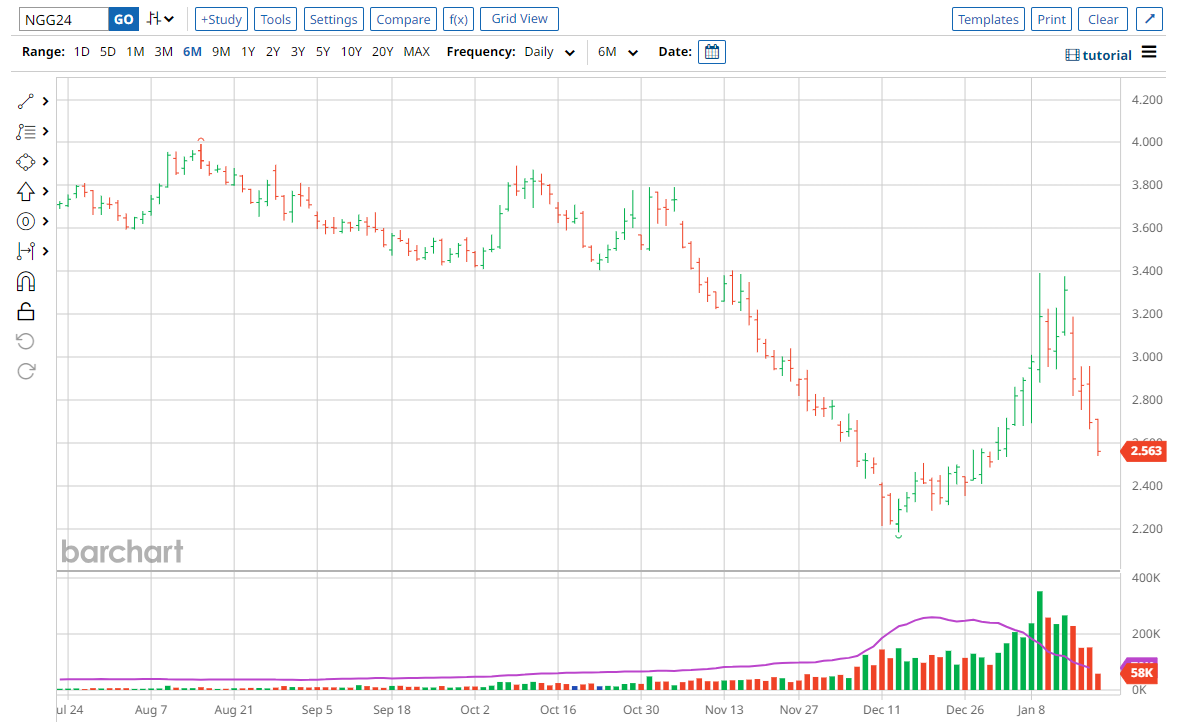

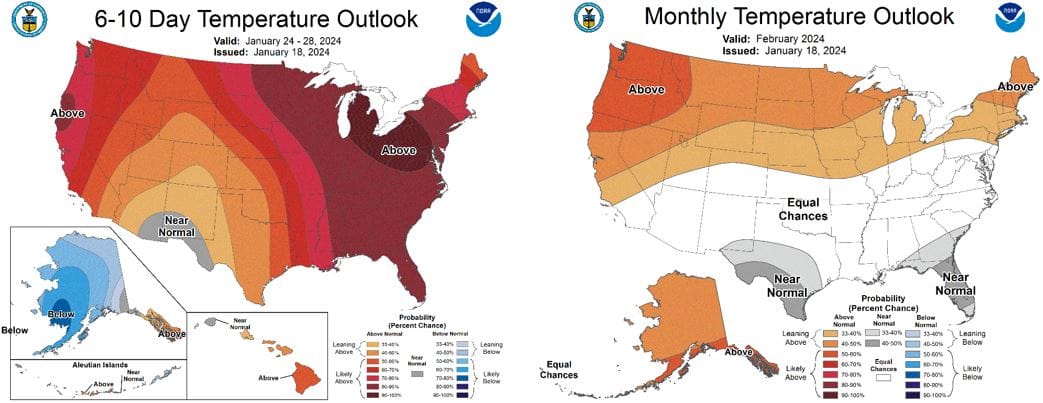

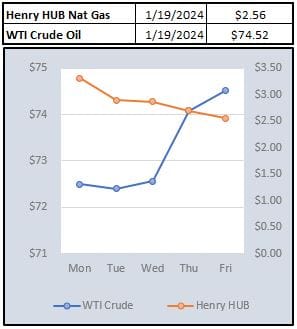

LMPs shot up as winter storms worthy of naming raced across the US and Canada. As shown below, the February natural gas contract over the last week looks like the inverse of the thermometer. As impressive as these moves were, they represent only a small fraction of the moves that some of the gas citygate and pipeline zones saw. Interestingly enough, unlike electricity, natural gas isn't scheduled daily. Weekends are scheduled on Friday. When a holiday on a Friday or Monday creates a long weekend, three days need to be scheduled at once. This is what we saw last weekend, as traders had to buy and schedule natural gas for the weekend and Monday all on Friday. Because of that, local spot prices went through the roof heading into the unknown of cold weather. For example, at the Chicago Citygate, natural gas for Thursday, Jan 11 was priced at $3.00/MMBtu, and it was $3.76/MMBtu on Tuesday. In between, the price for the full weekend and holiday cleared at $25.82/MMBtu. These moves aren't unusual, but they can be exaggerated over long weekends as the fear of well freeze-ups and pipeline constraints has buyers scrambling.
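To put the Chicago Citygate numbers in perspective, here is a minimal Python sketch that expresses the weekend-and-holiday price as a multiple of the prices on either side of it. The three prices come from the paragraph above; the variable and function names are our own and purely illustrative.

```python
# Chicago Citygate spot prices quoted above, in $/MMBtu.
thursday_price = 3.00          # gas for Thursday, Jan 11
weekend_package_price = 25.82  # full weekend plus holiday, bought on Friday
tuesday_price = 3.76           # gas for the following Tuesday


def price_multiple(spike: float, baseline: float) -> float:
    """Return how many times larger the spike price is than the baseline."""
    return spike / baseline


multiple_vs_thursday = price_multiple(weekend_package_price, thursday_price)
multiple_vs_tuesday = price_multiple(weekend_package_price, tuesday_price)

print(f"Weekend package vs Thursday: {multiple_vs_thursday:.1f}x")
print(f"Weekend package vs Tuesday:  {multiple_vs_tuesday:.1f}x")
```

By this measure the long-weekend package cleared at roughly 8.6 times Thursday's price and about 6.9 times Tuesday's.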

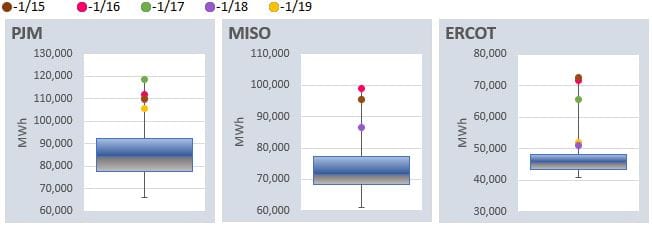

As timing would have it, FERC held its January meeting yesterday. As expected, there was plenty of discussion about last week's cold and storms. Commissioners came out firing, talking about the need for transmission and dispatchable generation. In his opening remarks, Chairman Phillips pointed out that SPP imported more electricity this week than it did during Winter Storm Uri. Commissioner Christie commented that PJM had its peak covered by 90% dispatchable generation, while MISO was at 75%, with wind covering another 20%. The commissioner commended the RTOs/ISOs on a job well done, with Chairman Phillips reminding all in attendance that winter is only half over.

Wall St is about to head into what is called, affectionately or with disdain, silly season, which happens four times a year: quarterly earnings reports. For the last couple of quarters, the buzzword du jour has been two letters: AI. AI, who cares?

The reality is the world of electricity needs to care, and right fast! This is something we've been writing and speaking about over the last year, and if we may be so bold, any concern about EV load will become trivial compared to AI. AI means data centers, and much more than the crypto data centers that have caused concern in the past.

This week, The Verge ran an article interviewing Meta CEO Mark Zuckerberg. In the article, there was plenty of mention of AI and Meta's goals. After talent, the hardest thing to get for running AI models is computing power. Enter Nvidia, whose H100 GPUs are coveted as the industry choice for building AI models. According to an article in Tom's Hardware, a PC-centric website, Paul Churnock, Principal Electrical Engineer of Datacenter Technical Governance and Strategy at Microsoft, states that "one Nvidia H100 has the peak power consumption of 700W. At 61% annual utilization, it's equivalent to the power consumption of a household with 2.5 people." As the article states, if Nvidia gets close to its goal of selling 2 million chips in 2024, their combined power consumption would rank as the 5th largest city in the US, slightly ahead of Phoenix and behind Houston. Let that sink in: the chips Nvidia is to sell this year would be the 5th largest city in the US by power consumption. Obviously, these chips will be replacing some legacy hardware, but it's fair to say AI is an electricity mega consumer by whatever metric you use.
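The arithmetic behind those figures can be sketched in a few lines of Python. The 700 W peak draw, the 61% annual utilization, and the 2 million unit count come from the paragraph above. Everything else is a simplifying assumption on our part: each chip is treated as drawing its full peak power whenever it is utilized, a year is 8,760 hours, and cooling and other data center overhead are ignored.

```python
HOURS_PER_YEAR = 8760  # assumption: non-leap year

# Figures quoted in the paragraph above.
H100_PEAK_WATTS = 700
ANNUAL_UTILIZATION = 0.61
UNITS_SOLD = 2_000_000


def annual_energy_kwh(peak_watts: float, utilization: float) -> float:
    """Annual energy in kWh, assuming peak draw whenever the chip is utilized."""
    return peak_watts / 1000 * HOURS_PER_YEAR * utilization


# Energy used by one GPU over a year.
per_gpu_kwh = annual_energy_kwh(H100_PEAK_WATTS, ANNUAL_UTILIZATION)

# Energy used by the whole fleet, converted from kWh to TWh.
fleet_twh = per_gpu_kwh * UNITS_SOLD / 1e9

# Average continuous load of the fleet, converted from W to MW.
fleet_average_mw = H100_PEAK_WATTS * ANNUAL_UTILIZATION * UNITS_SOLD / 1e6

print(f"Per GPU:       {per_gpu_kwh:,.0f} kWh per year")
print(f"Fleet energy:  {fleet_twh:.2f} TWh per year")
print(f"Fleet average: {fleet_average_mw:,.0f} MW")
```

Under these assumptions, one H100 uses roughly 3,740 kWh a year, and 2 million of them use about 7.5 TWh, an average load of around 850 MW before any cooling or facility overhead is counted.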

Nvidia (NVDA) reports quarterly earnings near the end of February, which is toward the tail end of the earnings release calendar. When it does, Wall St analysts will be looking for unit sales guidance for their earnings calculations. We will be looking to see if they can surpass Houston.

NOAA WEATHER FORECAST

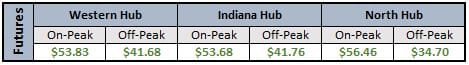

DAY-AHEAD LMP PRICING & SELECT FUTURES

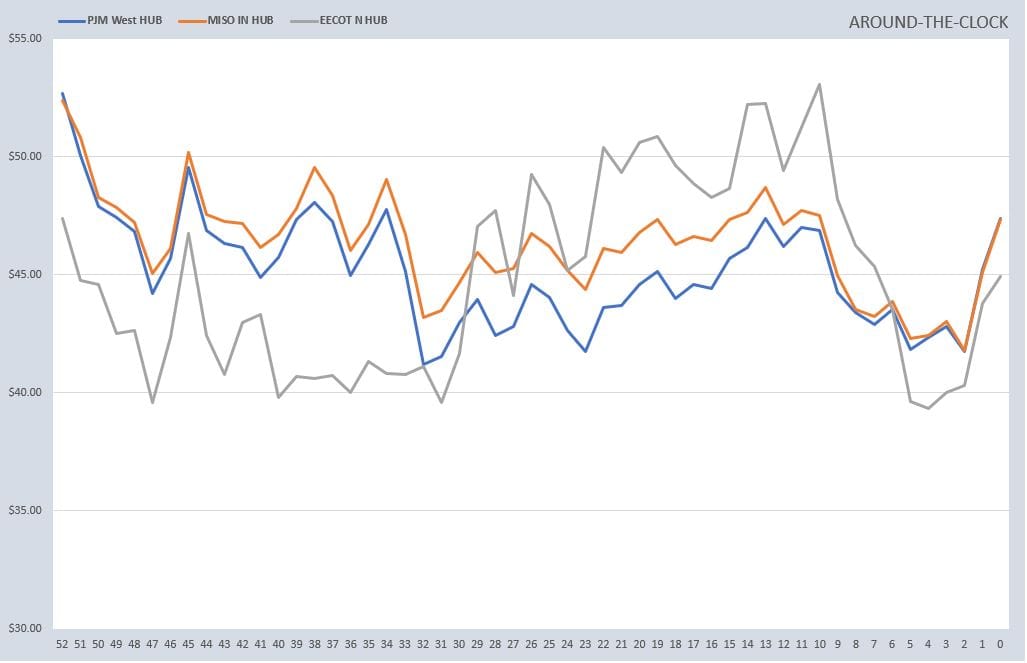

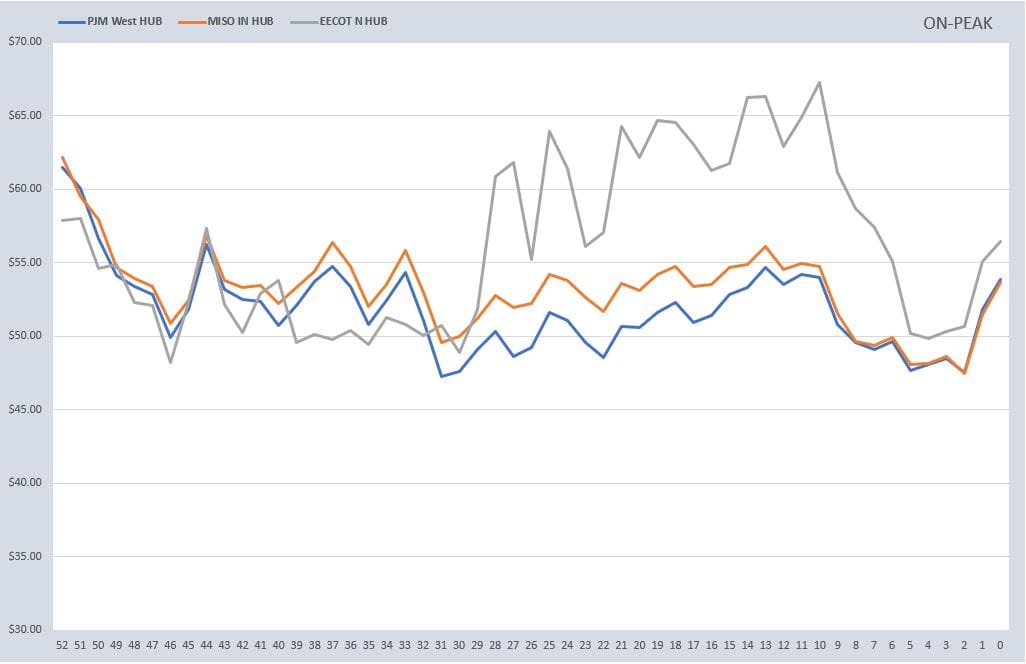

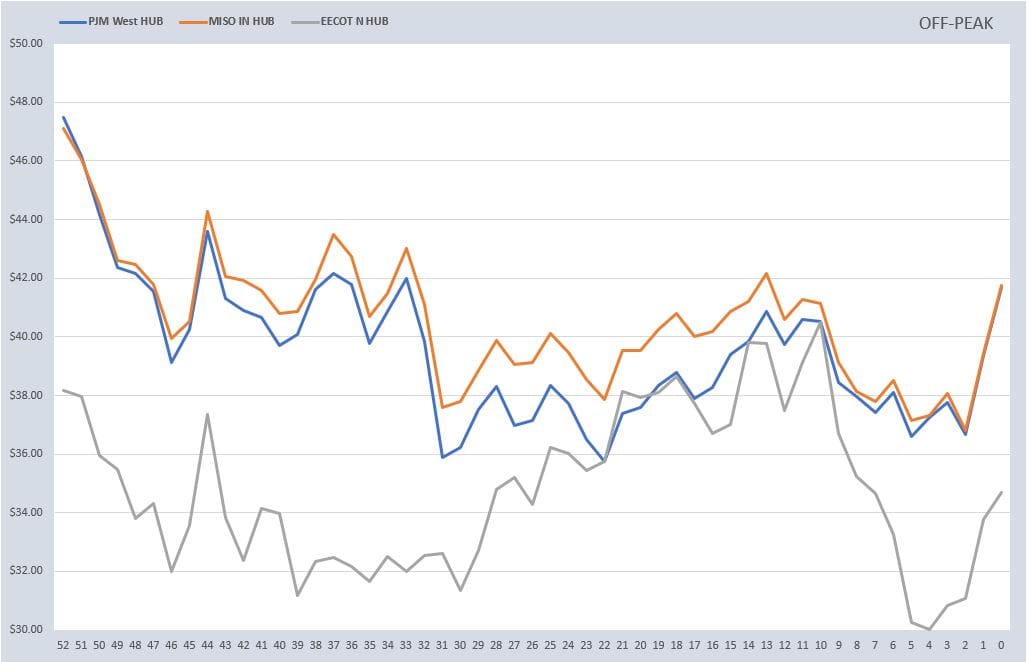

RTO AROUND THE CLOCK CALENDAR STRIP

DAILY RTO LOAD PROFILES

COMMODITIES PRICING
